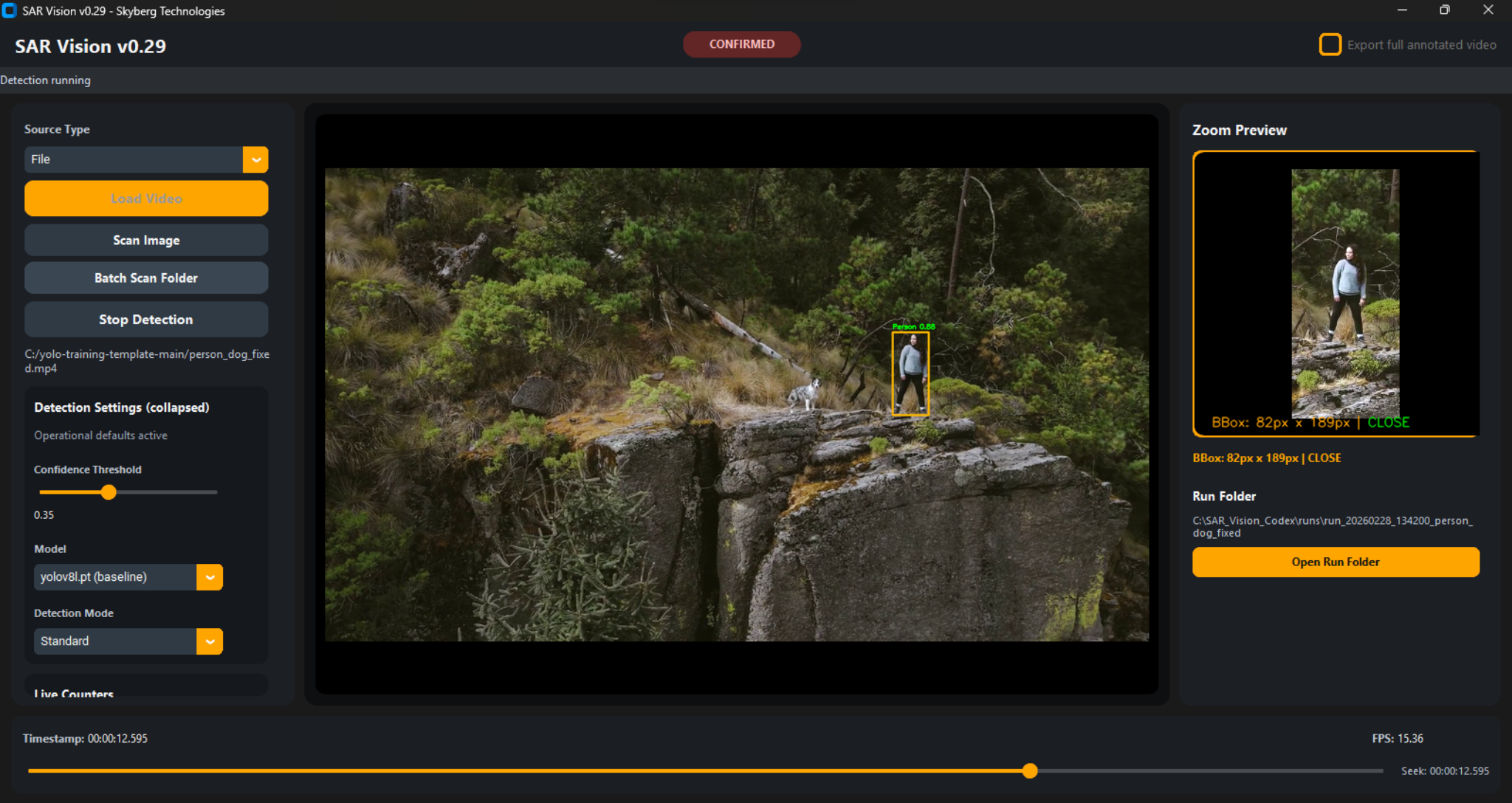

Illustrative Recall Comparison (Conceptual)

Precision: secondary objective

Recall (tuned for SAR): high; primary objective

Recall (general-purpose models): reduced in aerial contexts; untuned baseline

False Positive

Team investigates flagged area. No subject found. Cost: bounded time for investigation.

False Negative

Subject is in frame. System does not flag. Team continues past. Cost: potentially mission-critical.

Illustrative comparison based on internal evaluation datasets. Not independently validated.

The Model Is Calibrated to Flag, Not Filter

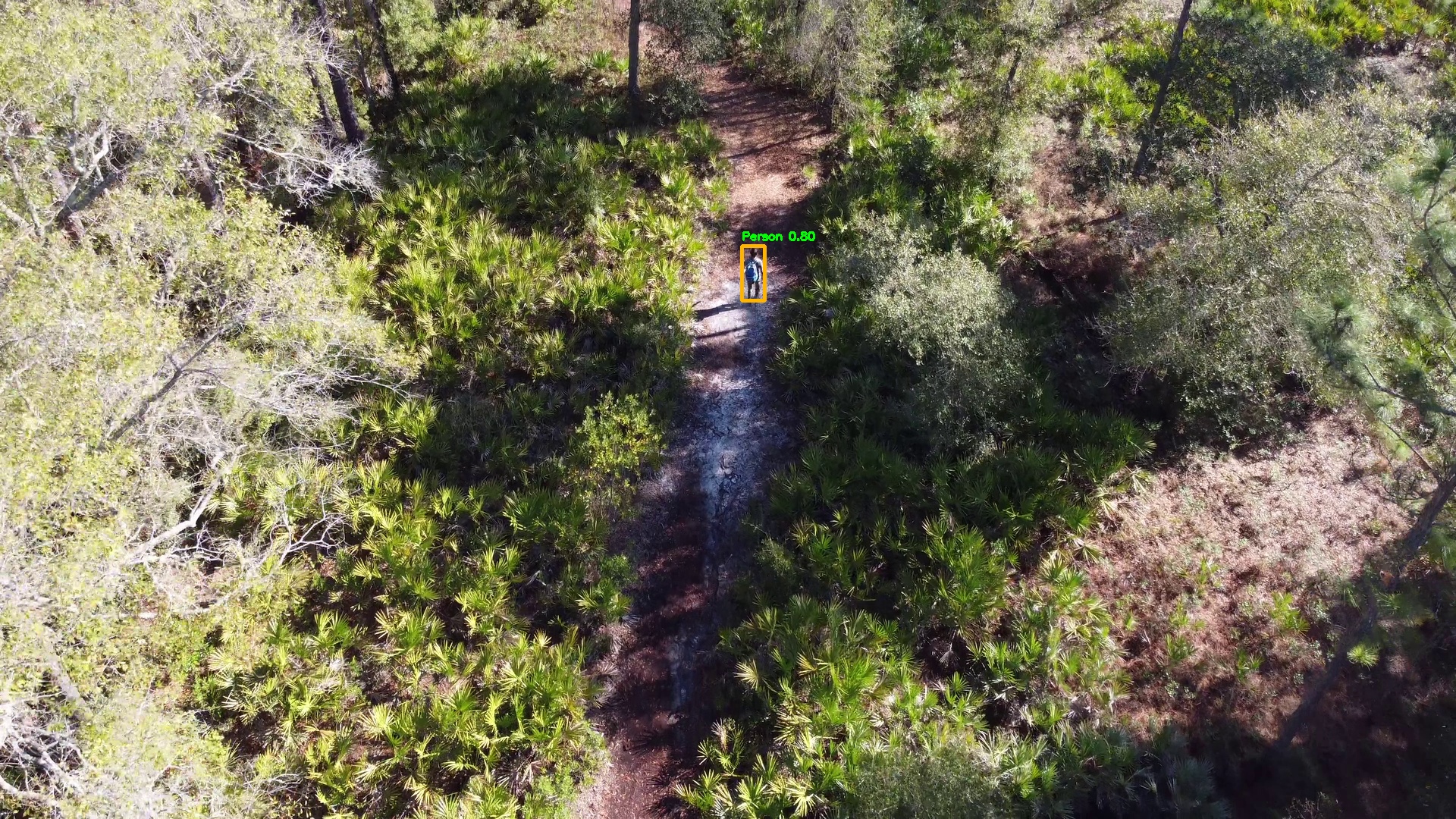

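Standard detection benchmarks weight precision and recall equally, and general-purpose models are often tuned toward precision because false positives carry a higher perceived cost. In SAR operations, that priority is reversed: a missed subject costs far more than an unnecessary check of a flagged area.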
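One common way to express a recall-first priority is the F-beta score, where beta greater than 1 weights recall more heavily than precision. The sketch below is illustrative only: the two operating points are invented numbers, not SAR Vision measurements, and nothing here implies that SAR Vision is evaluated with this exact metric.

```python
def f_beta(precision: float, recall: float, beta: float) -> float:
    """F-beta score: beta > 1 weights recall more heavily than precision."""
    if precision == 0.0 and recall == 0.0:
        return 0.0
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Hypothetical operating points, invented for illustration only.
precise_model = {"precision": 0.90, "recall": 0.60}
high_recall_model = {"precision": 0.50, "recall": 0.95}

for beta in (1.0, 2.0):
    a = f_beta(**precise_model, beta=beta)
    b = f_beta(**high_recall_model, beta=beta)
    preferred = "precise_model" if a > b else "high_recall_model"
    print(f"beta={beta}: precise={a:.3f} high_recall={b:.3f} -> {preferred}")
```

With these made-up numbers, the balanced score (beta = 1) prefers the precise model, while beta = 2 prefers the high-recall one. The ranking of two detectors can therefore flip depending on how recall is weighted.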

SAR Vision is configured to surface all contacts the model considers plausible, including low-confidence candidates.

Confidence thresholds are configurable, but the defaults intentionally err toward showing a contact rather than hiding it. Low-confidence detections are presented to the operator for assessment rather than suppressed before review.
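A minimal sketch of what "flag, not filter" post-processing could look like. Every name and value here (`Detection`, `triage`, `high_confidence`, `display_floor`, and the numeric defaults) is hypothetical and chosen for illustration; none of it is SAR Vision's actual API or configuration. The idea is that low-confidence detections are labeled and ranked for the operator rather than dropped.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    frame_id: int
    confidence: float  # model score in [0, 1]


def triage(detections, high_confidence=0.6, display_floor=0.1):
    """Label detections by tier instead of discarding low-confidence ones.

    Both thresholds are assumed values. Only detections below
    display_floor (treated here as "not plausible") are left out.
    """
    shown = []
    for det in detections:
        if det.confidence < display_floor:
            continue  # below the assumed plausibility floor
        tier = "high" if det.confidence >= high_confidence else "low"
        shown.append((tier, det))
    # Highest-confidence contacts first, so the operator reviews them first.
    shown.sort(key=lambda pair: pair[1].confidence, reverse=True)
    return shown


contacts = [Detection(1, 0.92), Detection(2, 0.18), Detection(3, 0.04)]
for tier, det in triage(contacts):
    print(f"frame {det.frame_id}: {tier} confidence ({det.confidence:.2f})")
```

A precision-oriented pipeline would typically keep only the first contact. In this sketch the 0.18 candidate still reaches the operator, marked as low confidence.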

The aim is to make it more likely that every plausible contact reaches a human evaluator instead of being filtered out beforehand.

This approach is reflected throughout the detection pipeline, from model training to post-processing: each stage is oriented toward SAR context rather than general benchmark performance.

Design Principle

SAR Vision surfaces contacts. It does not adjudicate them. Every flagged detection requires human verification before any operational action. The system provides decision support; the operator retains decision authority.